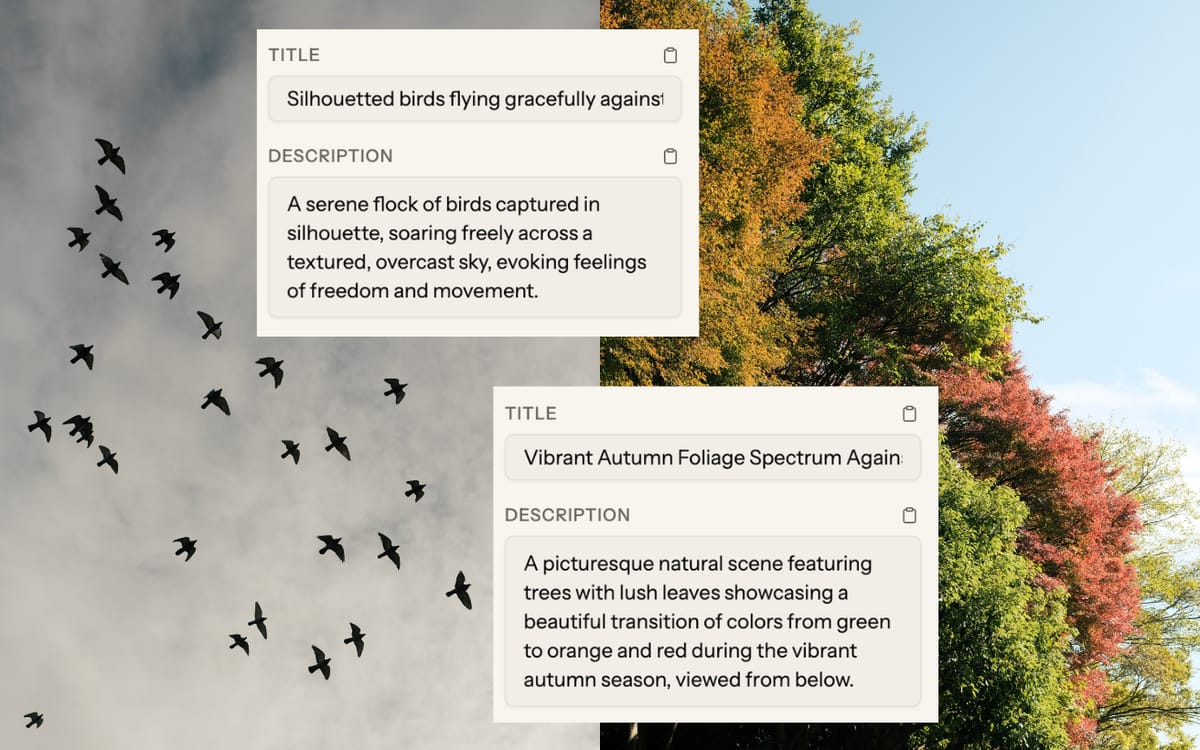

Vision AI models have gotten genuinely good at describing images. In a lot of cases they're better than contributors who've been staring at their own photos for hours and have stopped seeing them clearly.

"Better than a tired photographer" is a lower bar than "accurate enough to submit without checking," though. So here's an honest look at where AI holds up and where it still needs a human in the loop.

How vision AI actually works (briefly)

Modern AI metadata tools use large vision models — systems trained on enormous datasets of images and text together. When you send a photo, the model analyzes the visual content and generates descriptive text based on what it sees.

The thing that matters most: the AI is reading the actual image, not the filename. A tool generating metadata from filenames alone ("photo_2024_03_15_DSC_9847.jpg") is guessing, not analyzing. Vision-based tools can tell that a photo contains a golden retriever (not just "dog"), warm autumn tones, a hiking trail in the woods, and a mood of quiet solitude — because they can see it.

This is what Picseta does. It sends your images to Gemini Flash, a multimodal AI model, and generates titles, descriptions, and keywords based on what's actually in the photo.
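As a rough illustration of what happens after the model replies, here is a minimal sketch of turning that reply into structured metadata. It assumes the model was asked to answer in JSON with `title`, `description`, and `keywords` fields. That schema and the names `PhotoMetadata` and `parse_metadata` are hypothetical, chosen for illustration; this is not Picseta's actual code or the Gemini API.

```python
import json
from dataclasses import dataclass


@dataclass
class PhotoMetadata:
    title: str
    description: str
    keywords: list[str]


def parse_metadata(reply_text: str) -> PhotoMetadata:
    """Parse a JSON reply (hypothetical schema) into PhotoMetadata."""
    data = json.loads(reply_text)
    # Normalize keywords: trim, lowercase, drop blanks and duplicates, keep order.
    seen = set()
    keywords = []
    for raw in data.get("keywords", []):
        kw = str(raw).strip().lower()
        if kw and kw not in seen:
            seen.add(kw)
            keywords.append(kw)
    return PhotoMetadata(
        title=str(data.get("title", "")).strip(),
        description=str(data.get("description", "")).strip(),
        keywords=keywords,
    )


# Example reply text, invented for this sketch.
reply = (
    '{"title": "Golden retriever on an autumn hiking trail", '
    '"description": "A golden retriever walks along a forest trail in warm autumn light.", '
    '"keywords": ["golden retriever", "Dog", "autumn", "hiking trail", "dog", "solitude"]}'
)
meta = parse_metadata(reply)
print(meta.title)
print(meta.keywords)
```

Normalizing at this step means duplicate or differently cased keywords from the model never reach the review stage.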

Where AI is reliable

Object and scene identification

This is the strongest area. Given a photo of a laptop, coffee cup, desk plant, and natural window lighting, AI identifies all of it and generates matching keywords at least as well as a careful human reviewer. It doesn't get distracted. Doesn't overlook the plant in the corner. Doesn't forget to tag the material of the desk surface because it's been doing this for three hours already.

For studio shots, product photos, nature imagery, food photography, and most commercial stock content, AI keyword coverage is comprehensive.

Color and mood tags

Color identification is almost trivially accurate. Mood and feeling tags — peaceful, energetic, nostalgic, tense — are also handled well. Vision models have been trained on so much image-caption data that they've learned the relationship between visual cues and emotional language.

This matters because feeling keywords are the most skipped category among contributors and among the most searched by buyers. AI generates them unprompted.

Consistent output quality

Manual keywording degrades over a long session. After your 20th photo, you're less thorough, more likely to skip secondary details. AI quality doesn't drop with volume. Your 100th image in a batch gets the same treatment as the first.

Where you still need to look

Specific locations

AI can tell that a photo was taken outdoors in a Mediterranean-looking city. It can't reliably tell you it's Valletta, Malta rather than somewhere in southern Italy or Greece. If your photo documents a specific, identifiable location, add or verify location keywords yourself.

Editorial context

AI doesn't know what was happening when you pressed the shutter. If you photographed a rally in a specific city on a specific date, those details have to come from you. The editorial description format Shutterstock requires — city, country, date, description — can't be generated without that information.
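Since those four fields have to come from you, it can help to assemble them in one place. The sketch below joins city, country, date, and description into a single caption line. The function name `editorial_caption`, the exact punctuation, and the example values are assumptions for illustration, so check the agency's current guidelines for the required layout.

```python
from datetime import date


def editorial_caption(city: str, country: str, shot_on: date, description: str) -> str:
    """Join the four editorial fields into one caption line.

    The separator style ("City, Country - Month D, YYYY: text") is an
    assumption for illustration, not a confirmed agency format.
    """
    for name, value in (("city", city), ("country", country), ("description", description)):
        if not value.strip():
            raise ValueError(f"{name} is required for an editorial caption")
    # Build the date by hand so the day has no leading zero on any platform.
    date_text = f"{shot_on.strftime('%B')} {shot_on.day}, {shot_on.year}"
    return f"{city.strip()}, {country.strip()} - {date_text}: {description.strip()}"


# Invented example values.
caption = editorial_caption(
    "Valletta", "Malta", date(2024, 3, 15), "Crowd gathers at a rally in the city center"
)
print(caption)
```

Raising an error on a blank field makes it hard to submit an editorial image with the context missing.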

Brand names and trademarks

AI models often avoid naming trademarked brands, even when those brands are clearly visible in an image. A photo with a Nike logo in frame might get "sportswear" or "athletic clothing," not "Nike." For most stock submissions this is fine (and probably legally safer). For product photography where brand specificity matters, you'll need to add those terms manually.
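Adding terms by hand usually means merging them into the generated list without creating duplicates. A minimal sketch, assuming keywords are plain strings and that the agency caps the list at 50 entries (the `limit` default and the function name `merge_keywords` are assumptions, not something this article specifies):

```python
def merge_keywords(ai_keywords, manual_keywords, limit=50):
    """Put manual terms first, then AI terms, dropping case-insensitive duplicates."""
    merged = []
    seen = set()
    for kw in list(manual_keywords) + list(ai_keywords):
        key = kw.strip().lower()
        if key and key not in seen:
            seen.add(key)
            merged.append(kw.strip())
    # Manual terms come first, so they survive if the list hits the cap.
    return merged[:limit]


ai = ["sportswear", "athletic clothing", "running shoes", "runner"]
manual = ["Nike", "Sportswear"]
result = merge_keywords(ai, manual)
print(result)
```

The same helper works for location keywords you add yourself.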

Medical and scientific content

A surgical procedure photo will be identified as a medical scene. Whether AI correctly names the specific instruments or distinguishes between surgical specialties is less reliable. If your portfolio includes specialized medical or scientific content, those keywords need close review before submitting.

Submission type

AI can't know whether your subjects signed model releases. It also can't know whether a photo was shot in a controlled studio or at a real newsworthy event. Commercial vs. editorial is your call, every time.

The actual workflow

Treat AI as doing a thorough first draft, then review before submitting. For most commercial stock photos, the process looks like this:

- Upload your batch and let AI generate all metadata at once

- Scan titles — make sure they read like captions, not keyword lists

- Verify submission type for each photo

- Add location specifics where the image has an identifiable place

- Scan keywords for anything that doesn't fit (rare for good vision AI, but worth 10 seconds)

- Submit

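For a batch of 20 photos, at the 1–3 minutes per photo that reviewed AI output typically needs, this takes roughly 20–60 minutes. The same batch done manually from scratch, at 8–15 minutes per photo, takes roughly 2.7–5 hours.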

How much time does it save?

A single stock photo done properly — title, 35+ keywords, description — takes most contributors 8–15 minutes. At that rate, 100 photos is somewhere between 13 and 25 hours of metadata work. That's a lot of hours that aren't shooting.

With AI and review, per-photo time drops to 1–3 minutes for typical commercial content. The same 100-photo batch becomes roughly 1.7–5 hours.
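The arithmetic behind these ranges is simple enough to rerun with your own numbers. A small sketch, using the per-photo estimates quoted above (the function name `batch_hours` is just for illustration):

```python
def batch_hours(photos: int, min_per_photo: float, max_per_photo: float) -> tuple[float, float]:
    """Return (low, high) total hours for a batch at a per-photo minute range."""
    return photos * min_per_photo / 60, photos * max_per_photo / 60


manual_low, manual_high = batch_hours(100, 8, 15)
ai_low, ai_high = batch_hours(100, 1, 3)
print(f"manual:      {manual_low:.1f}-{manual_high:.1f} h")  # manual:      13.3-25.0 h
print(f"ai + review: {ai_low:.1f}-{ai_high:.1f} h")          # ai + review: 1.7-5.0 h
```

Swap in your own batch size and per-photo times to see where your workflow lands.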

For contributors uploading regularly, the difference is whether metadata stays manageable or becomes the bottleneck that limits how much you publish.

See our guide on batch keywording workflows for how to structure larger uploads.

Can AI replace manual keywording for stock photos?

AI handles the bulk of it well — subjects, colors, mood, actions, setting. The gaps are specific locations, editorial context, submission type decisions, and specialized domain knowledge. Think of it as doing the first 80% rather than replacing the process entirely.

How accurate is AI-generated stock photo metadata?

For standard commercial stock content — people in everyday situations, nature, food, objects — accuracy is high. It drops for images that require context from outside the image itself (specific locations, current events) or specialized knowledge (plant species, surgical instruments). And unlike humans, it doesn't have off days. Image 100 in a batch gets the same attention as image 1.

What should I check before submitting AI-generated metadata?

Five things: location specifics (AI tends to fall back on generic terms even when an identifiable location is visible), editorial description format (the date and city/country have to come from you), submission type (commercial vs. editorial depends on release status, not image content), keyword relevance (scan for anything that doesn't match the actual photo), and title readability (should read like a caption, not a keyword list).
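If you track drafts in a script or spreadsheet export, those five checks can be turned into reminders. The sketch below is one hypothetical way to do it. The `Draft` fields, the `review_flags` function, and the comma-count heuristic for titles are all assumptions made for illustration, and the keyword scan itself still has to be done by a person.

```python
from dataclasses import dataclass, field


@dataclass
class Draft:
    title: str
    keywords: list[str]
    submission_type: str = ""  # "commercial" or "editorial", set by the contributor
    has_identifiable_location: bool = False
    location_keywords: list[str] = field(default_factory=list)
    editorial_caption_done: bool = False


def review_flags(draft: Draft) -> list[str]:
    """Return reminders for a human reviewer; it does not judge keyword relevance."""
    flags = []
    if draft.has_identifiable_location and not draft.location_keywords:
        flags.append("add location keywords")
    if draft.submission_type not in ("commercial", "editorial"):
        flags.append("choose submission type")
    if draft.submission_type == "editorial" and not draft.editorial_caption_done:
        flags.append("write editorial description (city, country, date)")
    # Crude heuristic (an assumption): a title with several commas is
    # probably a keyword list rather than a caption.
    if draft.title.count(",") >= 3:
        flags.append("rewrite title as a caption")
    # Always remind the reviewer to scan the keywords by eye.
    flags.append("scan keywords for anything that does not match the photo")
    return flags


draft = Draft(
    title="street, city, old town, balcony, sunny",
    keywords=["street", "balcony", "old town"],
    has_identifiable_location=True,
)
flags = review_flags(draft)
for flag in flags:
    print("-", flag)
```

The function only flags what to look at; every decision it points to remains yours.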